A Guide for Hive Engine (HE) Automatic Snapshots (#1)

It was a busy week for me, as usual... It puzzles me that I have worked like this for so many years. But even so, I still feel the motivation to say "let's go" on this!

Did you read my last development about HE snapshots?

If you are a Witness on Hive-Engine, then this is for you!

In short, I have started a share on Google Drive where I put HE snapshots for anyone to use (including myself).

👉 Hive-Engine Snapshots Google Drive Share!

Looking for Automation on Snapshot Backups?

Since the Google Drive share has worked so well when done manually, why not automate it?

This is a cheap solution that is quite easy to implement. But to make it achievable by 99% of you without much thought, I am developing a little script that makes things easier.

But before that, let me quickly go through google-drive-ocamlfuse (GitHub repo), the option I have chosen from the 3 options in this guide. This will also serve as a good backup in case the guide gets deleted in the future.

I have used Ubuntu with GNOME (graphical interface), but this can also be done without it by redirecting the browser to another host (X exported). I am not going through that technicality here, but if you absolutely need it and can't find a way, reach out.

1st step - add Google Drive to your node

So, let's start. First, I would suggest you create a new Google Account just for this. This makes sure you both have the maximum free space possible (15GB) and don't need to worry about exposing any personal information.

Secondly, add the new repo and install the Google Drive "plug-in":

sudo add-apt-repository ppa:alessandro-strada/ppa

sudo apt install google-drive-ocamlfuse

Then run the first command below. It opens a browser where you authenticate and authorize the use of your Google Drive (a cached token will later let you mount without further permission requests). Afterward, create a directory and mount Google Drive on it.

google-drive-ocamlfuse

mkdir -v ~/myGoogleDrive

google-drive-ocamlfuse -skiptrash ~/myGoogleDrive

Note #1: Please don't use root for this! Run it as an unprivileged user.

Note #2: Make sure you use -skiptrash so that deleted files don't land in the trash (where they would still take up space in Google Drive).

Note #3: The Google Drive cached token can expire or be revoked, so you might need to re-authenticate at some point in the future. Don't assume access is granted forever.
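Because of Note #3, a scheduled job should not assume the drive is still mounted. Here is a minimal sketch of a guard, assuming the ~/myGoogleDrive directory from above; the function name ensure_gdrive_mounted is only illustrative and not part of any released script.

```shell
# Hypothetical helper: succeed only if Google Drive is mounted on the directory.
ensure_gdrive_mounted() {
    local dir="$1"
    # mountpoint -q exits 0 only when something is mounted on the directory.
    if mountpoint -q "$dir"; then
        return 0
    fi
    # Try to mount using the cached token. This fails if the token has
    # expired or been revoked, in which case you need to re-authenticate.
    google-drive-ocamlfuse -skiptrash "$dir" || return 1
    mountpoint -q "$dir"
}

# Usage (directory name is the one used in this guide):
#   ensure_gdrive_mounted "$HOME/myGoogleDrive" || { echo "Google Drive not mounted" >&2; exit 1; }
```

If the guard fails, it is safer to stop than to copy a multi-gigabyte archive into an empty local directory by mistake.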

hiveengine@hiveengine:~/myGoogleDrive/hive-engine-snapshots$ ls -lart

total 8658095

-r--r--r-- 1 hiveengine hiveengine 163 Mar 20 20:44 'Snapshots - Issues Report.desktop'

drwxrwxr-x 1 hiveengine hiveengine 4096 Mar 20 20:45 .

-rw-rw-r-- 1 hiveengine hiveengine 4420846597 Mar 24 00:42 hsc_2021-03-24_b52385461.archive

drwxrwxr-x 2 hiveengine hiveengine 4096 Mar 24 23:58 ..

-rw-rw-r-- 1 hiveengine hiveengine 4445032996 Mar 25 00:19 hsc_2021-03-25_b52413665.archive

hiveengine@hiveengine:~$ df -h ~/myGoogleDrive

Filesystem Size Used Avail Use% Mounted on

google-drive-ocamlfuse 15G 13G 2.5G 84% /home/hiveengine/myGoogleDrive

And this is basically it. You have your Google Drive mounted on your Ubuntu system.
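With only 15GB available, space runs out quickly: in the df output above, 2.5G free would not fit another archive of roughly 4.4 GB. A small sketch of a free-space check before copying, assuming df reports the mount as shown above (has_free_space is a hypothetical name):

```shell
# Hypothetical helper: succeed if DIR has at least NEEDED_BYTES available.
has_free_space() {
    local dir="$1" needed="$2" avail_kb
    # -P forces one line per filesystem; -k reports 1K blocks.
    # Column 4 of the second line is the available space.
    avail_kb=$(df -Pk "$dir" | awk 'NR==2 {print $4}')
    [ -n "$avail_kb" ] || return 1
    [ $(( avail_kb * 1024 )) -ge "$needed" ]
}

# Usage: compare against the size of the archive you are about to copy, e.g.
#   has_free_space "$HOME/myGoogleDrive" 4445032996 || echo "not enough room" >&2
```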

2nd step - Use my script (to be released next week) to automate snapshots!

This is where my added value comes in... I have created a little script that lets you schedule backups through crontab (for example).

I would like to stress the importance of automating your witness registration status here, with something like my HE-AWM script, especially if you are going to run this one via crontab. This will ensure your witness gets unregistered during the MongoDB dump, avoiding missed blocks.

Don't worry, you will not harm the network much by being down if you are not running a very well-known RPC node. Otherwise, do your due diligence before applying automated backups like the ones I am going to propose.

In the future, I plan to include this in an HA-ready script. So, make sure you stay tuned for my developments.

And because I have not yet finished it (but made some great progress)... here is some "soon to be released" teaser output of what is "working so far". It will run through some daily experimentation during the week before release:
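For those who have not used crontab much, here is a small sketch that prints a daily crontab entry. The helper cron_entry, the script path, the log path and the 00:30 schedule are all assumptions for illustration; adjust them to your node.

```shell
# Hypothetical helper: print a crontab entry that runs SCRIPT daily at
# HOUR:MINUTE and appends both stdout and stderr to LOG.
cron_entry() {
    local script="$1" hour="$2" minute="$3" log="$4"
    printf '%s %s * * * %s >> %s 2>&1\n' "$minute" "$hour" "$script" "$log"
}

# Preview the line, then paste it into your crontab with `crontab -e`.
cron_entry "$HOME/hive-stuff/upload_archive_gdrive.sh" 0 30 "$HOME/hive-stuff/upload.log"
```

Redirecting the output to a log file keeps a record of each run that you can check later.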

hiveengine@hiveengine:~/hive-stuff$ ./upload_archive_gdrive.sh

hsc_2021-03-25_b52439942.archive

hsc_2021-03-27_b52488220.archive

2

hsc_2021-03-25_b52413665.archive

hsc_2021-03-25_b52439942.archive

2

sending incremental file list

>f+++++++++ hsc_2021-03-27_b52488220.archive

4,506,403,787 100% 4.81MB/s 0:14:54 (xfr#1, to-chk=0/1)

Number of files: 1 (reg: 1)

Number of created files: 1 (reg: 1)

Number of deleted files: 0

Number of regular files transferred: 1

Total file size: 4,506,403,787 bytes

Total transferred file size: 4,506,403,787 bytes

Literal data: 4,506,403,787 bytes

Matched data: 0 bytes

File list size: 0

File list generation time: 0.001 seconds

File list transfer time: 0.000 seconds

Total bytes sent: 4,507,504,118

Total bytes received: 35

sent 4,507,504,118 bytes received 35 bytes 1,482,975.54 bytes/sec

total size is 4,506,403,787 speedup is 1.00

Upload Complete!

As you can see, I am using rsync here, for two reasons. First, I can control the bandwidth of the transfer (this is local bandwidth, which can potentially affect your VM/system IO). Second, I have much better control over how I transfer files (thinking ahead, this will include local backups as well, not just Google Drive).

A heads-up: in this case, google-drive-ocamlfuse only lets rsync return (exit code 0) once the file has been completely uploaded to Google Drive. This means that I still have to find a way to control the bandwidth at which the plug-in uploads data to Google.

On my node, it had almost no impact and, as you can see above, it substantially lowered the average transfer speed reported by rsync. I had set 5 MB/s and the average to Google came down to about 1.4 MB/s. I did not verify it, but I believe the transfer to Google only begins after rsync has written all the data locally.
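A rough sketch of an rsync call that would produce output of this shape. The flags are inferred from the output above, not taken from the unreleased script, and upload_archive and the default limit are hypothetical.

```shell
# Hypothetical helper: copy one archive into a directory with a bandwidth cap.
upload_archive() {
    local src="$1" dest_dir="$2" limit_kib="${3:-5000}"
    # --itemize-changes prints lines like ">f+++++++++ name"
    # --progress        prints the running size / speed / time line
    # --stats           prints the "Number of files..." summary
    # --bwlimit         caps the transfer rate; without a suffix the value
    #                   is in units of 1024 bytes per second
    rsync --itemize-changes --progress --stats \
          --bwlimit="$limit_kib" "$src" "$dest_dir"/
}

# Usage (paths are assumptions):
#   upload_archive ./hsc_2021-03-27_b52488220.archive "$HOME/myGoogleDrive/hive-engine-snapshots"
```

The limit only applies to what rsync writes into the mounted directory, not to what the plug-in later sends to Google.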
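Since a zero exit code from rsync means the file really is on Google Drive, it can also serve as the signal that it is safe to delete older archives. The sketch below is one way to do that and is only a guess at the retention logic; prune_old_archives and the default of keeping 2 archives are assumptions. It relies on the hsc_YYYY-MM-DD_b<block>.archive names sorting by date.

```shell
# Hypothetical helper: keep only the newest KEEP archives in DIR.
prune_old_archives() {
    local dir="$1" keep="${2:-2}" total excess
    total=$(ls -1 "$dir"/hsc_*.archive 2>/dev/null | wc -l)
    excess=$(( total - keep ))
    # Nothing to do when there are no more than KEEP archives.
    [ "$excess" -gt 0 ] || return 0
    # Sorted by name the oldest come first, so delete the first EXCESS entries.
    ls -1 "$dir"/hsc_*.archive | sort | head -n "$excess" |
    while IFS= read -r old; do
        rm -f -- "$old"
    done
}

# Usage: prune only when the upload command succeeded, e.g.
#   rsync ... && prune_old_archives "$HOME/myGoogleDrive/hive-engine-snapshots" 2
```

If the drive cannot hold one extra archive, the order has to be reversed (prune first, then upload), at the cost of briefly having one backup fewer.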
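The numbers in the rsync output above fit that reading. A quick back-of-the-envelope check, assuming the final bytes/sec figure is roughly total bytes divided by the wall-clock time of the whole run:

```shell
# Figures copied from the rsync output above.
sent_bytes=4507504118
received_bytes=35
avg_rate=1482975                       # bytes/sec, fractional part dropped
local_copy_secs=$(( 14 * 60 + 54 ))    # 0:14:54 from the progress line

# Approximate wall-clock time of the whole rsync run.
total_secs=$(( (sent_bytes + received_bytes) / avg_rate ))
# Time rsync kept waiting after its own copy had reached 100%.
blocked_secs=$(( total_secs - local_copy_secs ))

printf 'whole rsync run:  %dm %02ds\n' $(( total_secs / 60 )) $(( total_secs % 60 ))
printf 'after local copy: %dm %02ds\n' $(( blocked_secs / 60 )) $(( blocked_secs % 60 ))
```

This gives about 50 minutes for the whole run, of which only about 15 were the local copy at 4.81MB/s. The remaining 35 minutes or so are presumably the plug-in uploading to Google before it lets rsync return.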

Don't worry if you can't yet go through this...

I will make it very easy in the next post, after I release the script. Hopefully, by post #2, this will be a no-brainer.

HE-AWM - Hive-Engine Auto Witness Monitor

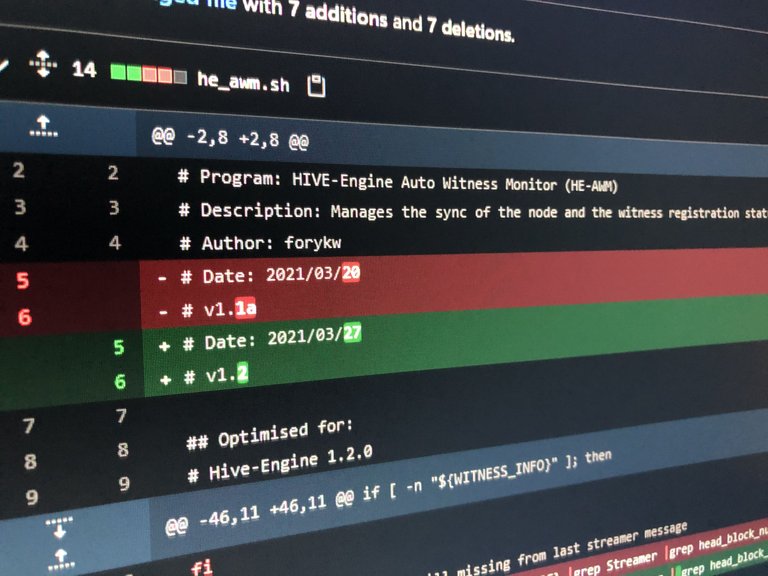

I have fixed two major problems with the previous version, but they should not affect your witness much (only in specific situations). Note that I have never experienced missed blocks because of those bugs, although if you are a top witness, the frequency of blocks signed might allow the bugs to show up.

If you wish, take this develop branch commit and it should fix those two problems. I will be making more changes to present next weekend along with the new auto snapshot backups script.

Meanwhile, the script will be running sort of 24/7... to validate that expected results didn't change.

Thank you for the support!

If you have any feedback, please let me know. I know I don't go as fast as many, but I am having fun helping out with this. So, it's both a pleasure and a professional aspiration that I can't yet dedicate myself to full time. 😋

https://twitter.com/forkyishere/status/1375740086382325766

Updates:

2021/03/28 - Added GitHub repo for the google-drive-ocamlfuse. And minor text typos/updates.

