Presenting HE-SS: Hive-Engine Snapshots Service

The long-term idea was to create a service, hence the name. You can use it as "a service" or just as a backup solution for yourself. The important part is that it allows anyone to share snapshots (as a service) via Google Drive.

v1.0 - Snapshots Backup using Google Drive - don't use this version

v1.1 - Snapshots Backup using Google Drive + mandatory fix to unregister the witness prior to stopping the node

This is the promised "2nd step" of "A Guide for Hive Engine (HE) Automatic Snapshots (#1)". Later on, I will write a second post in that series (hence the # numbering) to make things simpler to read and, if I manage, to compare this approach with other alternatives.

WARNING!

I highly recommend using a witness node management script (like the HE-AWM script) if you are planning to run backups like this via crontab. You should unregister your witness while the backup is taking place; otherwise, you can miss blocks. This also assumes you only have one node running.
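The ordering this warning implies can be sketched in shell. This is a minimal sketch, assuming a single node managed by pm2: only `PM2_PROCESS_NAME` comes from he-ss, while `unregister_witness`, `register_witness` and `dump_database` are hypothetical placeholders that deliberately fail until you replace them with your own commands (for example, from a script like HE-AWM). What it illustrates is that the node is restarted and the witness re-registered even when the dump fails.

```sh
# Minimal sketch of a safe backup order for a single witness node.
# PM2_PROCESS_NAME matches the he-ss variable; everything else here is a
# hypothetical placeholder, not the actual he-ss implementation.
PM2_PROCESS_NAME="${PM2_PROCESS_NAME:-my-he-node}"   # hypothetical default

# Placeholders: they print an error and return 1 until you wire in
# your own commands, so an unconfigured run never stops the node.
unregister_witness() { echo "unregister_witness: not implemented" >&2; return 1; }
register_witness()   { echo "register_witness: not implemented" >&2; return 1; }
dump_database()      { echo "dump_database: not implemented" >&2; return 1; }

safe_backup() {
  # 1. Unregister first; if that fails, leave the node untouched.
  unregister_witness || return 1

  # 2. Stop the node; if that fails, re-register and give up.
  if ! pm2 stop "$PM2_PROCESS_NAME"; then
    register_witness
    return 1
  fi

  # 3. Dump the database, remembering (not aborting on) any failure.
  local status=0
  dump_database || status=$?

  # 4. Always restart the node and re-register, even if the dump failed.
  pm2 start "$PM2_PROCESS_NAME"
  register_witness

  return "$status"
}
```

Once the placeholders are replaced, a backup script would call `safe_backup` and check its exit status before moving on to the upload.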

👷‍♂️ Deployment

A very simple, typical deployment. If you have not done so yet, complete the "1st step" of "A Guide for Hive Engine (HE) Automatic Snapshots (#1)". Then just clone the repo, edit the few variables I mention below (and in the repo README), test all the commands before going live, add the entry to crontab, and that's it.

1. Complete the "1st step" of "A Guide for Hive Engine (HE) Automatic Snapshots (#1)".

2. On your node, run: `git clone https://github.com/4Ykw/he-ss.git`

3. Change into the newly created `he-ss` directory and edit the variable `PM2_PROCESS_NAME` in both of the scripts below, setting it to the name of your pm2 node process (you can see which one you have with the `pm2 list` command):

   3.1 - `backup_mongodb.sh`

   3.2 - `upload_archive_gdrive.sh`

4. In the `backup_mongodb.sh` script (v1.1), you also need to specify your witness node directory in the variable `WITNESS_NODE_DIR` (default is `~/prod/steemsmartcontracts`).

   4.1. (optional) I have set other variables for you to customise under the "Adjust to your node" comment. They should be self-explanatory.

5. Set a crontab entry to run the `he_ss.sh` script at the time and frequency of your liking. The script includes an example that runs it daily at 3 AM; here it is for easier copying and pasting:

   `0 3 * * * cd /path_where_he_ss.sh_is && ./he_ss.sh >> ./he_ss.log 2>&1`
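If you prefer to install the crontab entry from the shell rather than with `crontab -e`, the following sketch keeps that step idempotent: it drops any previous line mentioning `he_ss.sh` and appends the new one. The function name and the `$HOME/he-ss` path are hypothetical; adjust them to wherever you cloned the repo.

```sh
# merge_cron_entry ENTRY
# Reads an existing crontab on stdin and prints it with every line that
# mentions he_ss.sh removed, followed by ENTRY. Hypothetical helper.
merge_cron_entry() {
  # grep exits 1 when it outputs nothing (e.g. an empty crontab); ignore that.
  grep -vF 'he_ss.sh' || true
  printf '%s\n' "$1"
}

# Example (hypothetical path), installing the daily 3 AM entry:
#   ENTRY='0 3 * * * cd "$HOME/he-ss" && ./he_ss.sh >> ./he_ss.log 2>&1'
#   crontab -l 2>/dev/null | merge_cron_entry "$ENTRY" | crontab -
```

Running it twice leaves a single `he_ss.sh` line, so changing the schedule later is just a matter of running it again with a new `ENTRY`.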

You can run it manually by simply executing the script, and I would actually advise doing so before assuming everything will run fine via crontab. I have also created a script (`test_commands.sh`) to help you quickly determine whether all the required commands work. Read the instructions within the `he_ss.sh` script and you should be alright. In the future, this might become automatic.
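The idea behind `test_commands.sh` can be approximated with `command -v`. This sketch is not the script from the repo: the function name and the list of tools (pm2, mongodump, tar, rsync, google-drive-ocamlfuse) are assumptions about what a setup like this needs.

```sh
# check_commands CMD...
# Prints OK/MISSING for each command and returns non-zero if any is missing.
check_commands() {
  local missing=0 cmd
  for cmd in "$@"; do
    if command -v "$cmd" >/dev/null 2>&1; then
      echo "OK      $cmd"
    else
      echo "MISSING $cmd"
      missing=$((missing + 1))
    fi
  done
  [ "$missing" -eq 0 ]
}

# Hypothetical tool list; adjust it to what your scripts actually call.
#   check_commands pm2 mongodump tar rsync google-drive-ocamlfuse
```

Running the same check once from a crontab entry is a cheap way to catch PATH differences, since cron usually starts jobs with a much smaller PATH than your login shell.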

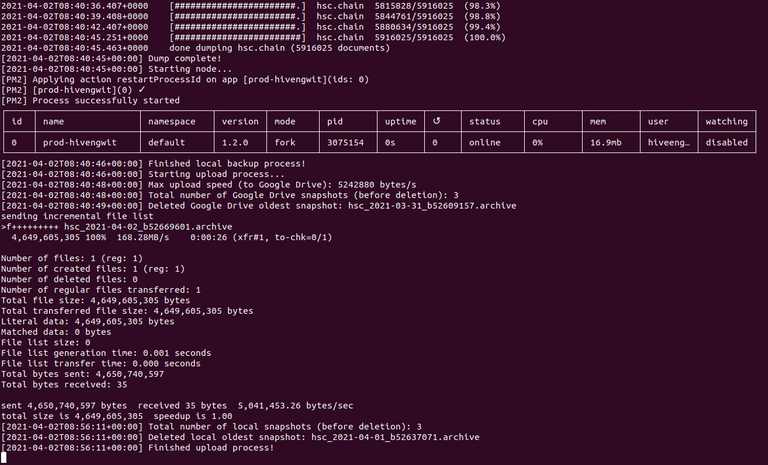

Another thing to note above is the log (which I recommend using), which captures the script's output. It ensures you know what happened at 3 AM, or whenever the script runs.
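Since everything ends up in `he_ss.log`, timestamping each step makes that log much easier to read the morning after. A minimal sketch with hypothetical helper names (`log`, `run_step`), not taken from he-ss:

```sh
# log MESSAGE...: print MESSAGE prefixed with the local date and time.
log() {
  printf '%s %s\n' "$(date '+%Y-%m-%d %H:%M:%S')" "$*"
}

# run_step CMD...: log the start and end of CMD, including its exit status,
# and return that status so the caller can decide whether to continue.
run_step() {
  log "START $*"
  "$@"
  local rc=$?
  log "END   $* (exit $rc)"
  return "$rc"
}
```

In a wrapper script this might look like `run_step ./backup_mongodb.sh || exit 1`, assuming the repo's scripts can be run individually.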

📰 Notes

During this process, I found that google-drive-ocamlfuse (GitHub repo) only starts uploading after rsync has finished transferring all the data (while rsync has not exited yet). Only when rsync returns (exit code 0) can you be sure the entire file was uploaded to Google Drive. This is very useful, but tricky at the same time.
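Because the upload is only guaranteed to be complete once rsync returns 0, the upload step should treat rsync's exit status as the source of truth. A minimal sketch, assuming the drive is mounted at a hypothetical `$HOME/gdrive`; `upload_archive`, `GDRIVE_MOUNT` and `BWLIMIT_KBPS` are illustrative names, not he-ss variables.

```sh
# Hypothetical settings (not he-ss variables): mount point and read limit.
GDRIVE_MOUNT="${GDRIVE_MOUNT:-$HOME/gdrive}"
BWLIMIT_KBPS="${BWLIMIT_KBPS:-5000}"   # rsync --bwlimit unit: 1024 bytes/s

# upload_archive FILE
# Copies FILE into the google-drive-ocamlfuse mount. Only a zero exit status
# from rsync is treated as "the file is fully on Google Drive".
upload_archive() {
  local archive="$1" rc
  rsync --bwlimit="$BWLIMIT_KBPS" "$archive" "$GDRIVE_MOUNT/"
  rc=$?
  if [ "$rc" -eq 0 ]; then
    echo "upload complete: $(basename "$archive")"
  else
    echo "upload FAILED (rsync exit $rc): $(basename "$archive")" >&2
  fi
  return "$rc"
}
```

rsync's `--bwlimit` value is in units of 1024 bytes per second unless a suffix is given, so 5000 here is roughly 5 MB/s of read speed.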

With rsync's --bwlimit flag, you can control the speed at which your VM reads the file (if your VM has lots of memory, the file might even be served entirely from cache). Then, by changing the variable max_upload_speed inside ~/.gdfuse/default/config, you control the upload speed to Google Drive (you need to unmount the drive before running the script again for the change to take effect).
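Changing the upload limit can be scripted as well. This sketch assumes the default config path mentioned above, a hypothetical mount point `$HOME/gdrive`, and that the key already exists in the file (the function only rewrites existing lines). As far as I know, google-drive-ocamlfuse expects `max_upload_speed` in bytes per second, with 0 meaning unlimited; double-check this against its documentation.

```sh
# Hypothetical defaults: the config path from the post, an example mount point.
GDFUSE_CONFIG="${GDFUSE_CONFIG:-$HOME/.gdfuse/default/config}"
GDRIVE_MOUNT="${GDRIVE_MOUNT:-$HOME/gdrive}"

# set_gdfuse_option KEY VALUE [FILE]
# Rewrites an existing "KEY=..." line. Writing back through cat (instead of
# mv) keeps the original file's permissions.
set_gdfuse_option() {
  local key="$1" value="$2" file="${3:-$GDFUSE_CONFIG}" tmp
  tmp="$(mktemp)" || return 1
  if sed "s/^${key}=.*/${key}=${value}/" "$file" > "$tmp"; then
    cat "$tmp" > "$file"
    rm -f "$tmp"
  else
    rm -f "$tmp"
    return 1
  fi
}

# The new limit is only picked up after unmounting and mounting again.
remount_gdrive() {
  fusermount -u "$GDRIVE_MOUNT"
  google-drive-ocamlfuse "$GDRIVE_MOUNT"
}
```

For example, `set_gdfuse_option max_upload_speed 5000000 && remount_gdrive` would set a limit of about 5 MB/s, assuming the bytes-per-second unit.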

However, what I found was that this limit acts as an average, or at least seems to, judging by the upload speeds I saw in my tests. Setting a 5 MB/s limit, for example, did indeed produce an average of 5 MB/s, but throughout the process the speed varied several times above and below that limit. If you find a way to enforce a hard cap, I would love to hear about it, as this is important to maintain and manage service levels.
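To compare the configured limit with what you actually get, you can time a run and compute the average rate yourself. This is a rough sketch with a hypothetical function name; it measures whole-second wall-clock time with `date +%s`, so it only makes sense for long transfers, and by design it reports an average, which hides the peaks and dips described above.

```sh
# measure_average_rate SIZE_BYTES CMD...
# Runs CMD and prints SIZE_BYTES divided by the elapsed wall-clock seconds.
# Whole-second resolution only, so use it for long transfers.
measure_average_rate() {
  local size="$1" start end elapsed
  shift
  start=$(date +%s)
  "$@" || return $?
  end=$(date +%s)
  elapsed=$((end - start))
  # Avoid dividing by zero for sub-second runs.
  [ "$elapsed" -gt 0 ] || elapsed=1
  echo $((size / elapsed))
}
```

Usage, with a hypothetical file name and mount point: `measure_average_rate "$(stat -c %s snapshot.archive)" rsync snapshot.archive "$HOME/gdrive/"` prints the average in bytes per second (`stat -c` is the GNU coreutils form).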

👉 My Hive-Engine Snapshots Google Drive Share!

It is now set to produce snapshots every day (at least for a while) while testing continues. Later, once you all have your own shared Google Drives, this will be reduced to maybe once per week, as it would not make sense for everyone to run backups daily.

🗳 Vote for Hive-Engine Witnesses here!!!

If you like this work, consider supporting @atexoras.witness as a witness. I am going to continue this work as much as I can, not least because it's really great fun for a geek like me! 😋😎

Post updated to include a mandatory fix that forces witness unregistration to avoid missed blocks.

Thanks for all the work you are putting into this. Really appreciated.

Appreciate it mate. It has been hard, but we keep standing.

I don't know much about programming; I am just a gadget user. But I read your post and tried to learn something new. Thanks a lot for sharing your great work with us. Regards from Indonesia.

I learned a lot in 24 hours many years ago... One thing was clear: you just need to keep the motivation and strive!

Okee

The code you write and the programs you make are great.

It's just a simple bash script... but yeah, I like it.

Yes very nice.